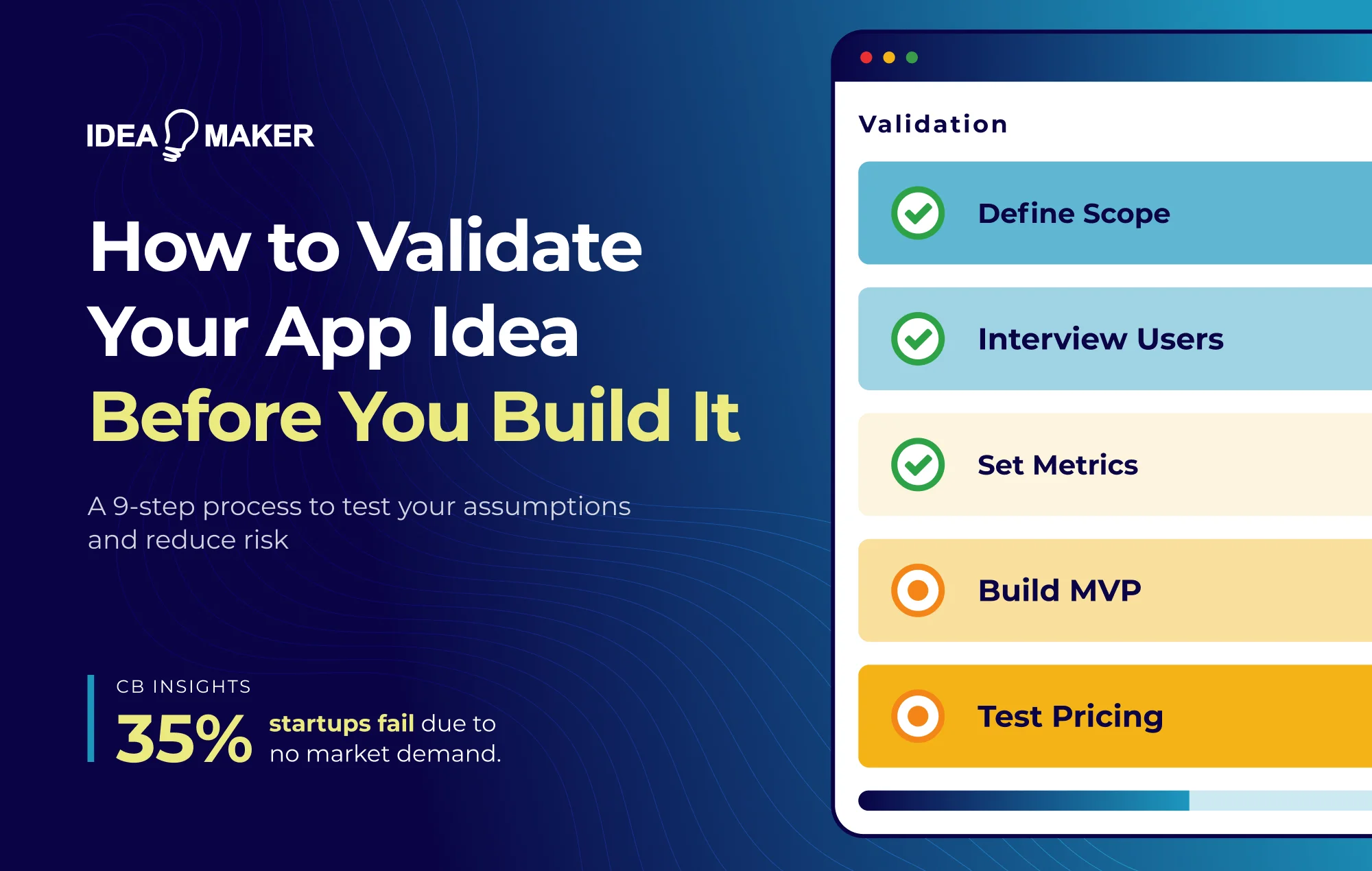

According to CB Insights, 35% of startups fail because there's no market need for their product - meaning over a third build something nobody actually wants. Building software is expensive, and the cost of building the wrong thing is even higher when you factor in time, opportunity cost, and the emotional toll of working on something that doesn't connect with users.

Validation is about systematically reducing uncertainty before committing significant resources. The purpose is to make evidence-based choices about whether to build, what to build, and who to build it for.

Let’s break down what validation really means and how to approach it step by step.

- Most founders think they have validated when they have not. A few encouraging conversations, a competitor analysis, maybe a survey with positive results. That is not validation.

- Start with the assumptions that could kill your idea. Is the pain real enough to drive action? Will users leave their current solution? Can you acquire them profitably? Will they pay a price that makes your business work?

- You do not need to build software to validate. Concierge MVPs, no-code tools, and fake-door tests tell you what you need to know before you write a line of production code.

- Validation does not stop at launch. You move from problem validation to retention and unit economics. The assumptions change, the rigor should not.

- We work with founders at this stage. If you are figuring out what to test first or whether your evidence is strong enough to move forward, that is exactly what we do at Idea Maker.

What Does App Idea Validation Really Mean?

Most founders have a version of validation that lives in their head - conversations with a few people who seemed excited, a competitor analysis that confirmed the market exists, maybe a survey with encouraging results. That's not validation. App idea validation is the process of testing whether your proposed solution addresses a real problem that people will pay to solve.

Validation separates what you believe from what you can demonstrate. Every founder believes their idea is good - otherwise they wouldn't pursue it. But belief isn't evidence. Validation produces evidence: recorded interview transcripts, conversion data from landing pages, pre-orders, or demonstrated willingness to pay.

What validation proves:

- The problem exists and matters enough for people to seek solutions

- Your target users can be reached affordably

- Users would switch from current solutions to something better

What validation doesn't prove:

- That you'll succeed (execution still matters)

- That your specific implementation is correct

- That market conditions will remain favorable

The trap many founders fall into is assuming a truly good idea will sound brilliant the first time someone hears it. In reality, many successful products emerged from ideas that seemed unremarkable or even questionable initially - what made them work wasn't the pitch, but whether they solved a problem people actively experience.

Why App Idea Validation Is Critical Before Development

Development typically consumes 40-55% of a mobile app project budget. For a moderately complex app, that can mean $80,000 to $150,000 or more before you have any users. If the core premise is flawed, that investment yields nothing except lessons you could have learned for a fraction of the cost.

Building without validation creates three categories of risk:

- Market risk means you're solving a problem that doesn't exist or isn't painful enough.

- Usability risk means users can't figure out how to get value from your solution.

- Technical risk means you can't actually build what you've promised at the cost and timeline you need.

Validation directly addresses all three. User interviews tell you whether the problem is real. Prototypes and landing pages test whether your proposed solution resonates. Technical spikes confirm feasibility before full development begins.

Beyond risk reduction, validation also changes your relationship with investors and stakeholders. "We believe users want this" lands very differently from "We interviewed 30 users, and 23 described this exact problem unprompted."

How to Validate Your App: Key Steps

So, where do you actually start? The process below is sequenced to find the biggest risks first - so you're not spending weeks confirming easy assumptions while the ones that could kill your idea remain untested.

1. Define the Smallest Testable Version of Your App Idea

Before you can test an idea, you must define its boundaries. Avoid feature creep during the definition phase.

Answer these questions:

Who is this for? Not "everyone" but a specific segment. "First-time managers at tech companies with 50-200 employees who struggle to give effective feedback" is testable. "People who want to be better managers" is not.

What specific problem does it solve? Describe from the user's perspective, including when it occurs and what happens if it goes unsolved.

How do they solve it today? Before conducting interviews, map what already exists. Search the App Store and Play Store for apps in your category, document their core features, pricing, and positioning, then read user reviews to find recurring complaints and unmet needs. This independent research shows what you're competing against and what switching costs you need to overcome - before you even talk to a potential user.

What outcome do you promise? Frame this in terms the user cares about - time saved, money earned, stress reduced.

What's your unfair advantage? Domain expertise, proprietary technology, unique distribution, or insights others don't have.

The output should be a one-page problem statement you can test against real users.

2. Identify the Riskiest Assumptions First

Every startup idea is built on assumptions, and some, if wrong, would invalidate the entire concept.

The danger is validating what's easy rather than what matters. Confirming that "people use smartphones" feels productive but teaches nothing useful.

So how do you decide which assumptions to test first? Rank each on two dimensions: impact (how catastrophic if wrong) and uncertainty (how confident you actually are). Assumptions that score high on both are where you start.

High-Risk Assumptions that Kill App Ideas

The assumptions below are common candidates for the top of that ranking, each paired with a way to test it.

- The problem is painful enough to matter. Many problems exist but don't rise to action. Test through user interviews - if fewer than half describe the problem unprompted or show emotional intensity, the pain likely isn't acute enough.

- Users will change existing behavior. Switching costs are real - muscle memory, data lock-in, existing relationships. Probe this by asking what users have already tried and why they stopped.

- You can acquire users affordably. Customer acquisition cost relative to lifetime value determines whether the business works. Run small paid ad experiments ($200-500) before building - if you can't get clicks at reasonable costs, scaling will be brutal.

- The pricing model is viable. Willingness to pay at a price that supports your business is separate from willingness to pay at all. Test with fake-door pricing pages or by asking what users currently spend on similar solutions.

- The solution is technically feasible. Some ideas require technology that doesn't exist yet. Validate by scoping the core technical challenge with an engineer or building a quick proof-of-concept for the riskiest component.
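The impact-and-uncertainty ranking can be kept in a spreadsheet, but a few lines of Python show the idea just as well. This is a minimal sketch: the 1-5 scales, the `Assumption` class, and the scores are illustrative assumptions, not part of any standard method.

```python
from dataclasses import dataclass


@dataclass
class Assumption:
    name: str
    impact: int       # 1 (minor if wrong) to 5 (fatal if wrong)
    uncertainty: int  # 1 (strong evidence) to 5 (pure guess)

    @property
    def risk(self) -> int:
        # Multiplying favors assumptions that score high on BOTH dimensions.
        return self.impact * self.uncertainty


def rank_assumptions(assumptions: list[Assumption]) -> list[Assumption]:
    """Return assumptions sorted riskiest first."""
    return sorted(assumptions, key=lambda a: a.risk, reverse=True)


# Illustrative scores only; replace them with your own honest estimates.
backlog = [
    Assumption("Problem is painful enough to matter", impact=5, uncertainty=4),
    Assumption("Users will change existing behavior", impact=4, uncertainty=4),
    Assumption("Users can be acquired affordably", impact=4, uncertainty=3),
    Assumption("Pricing model is viable", impact=5, uncertainty=3),
    Assumption("Solution is technically feasible", impact=5, uncertainty=1),
]

for a in rank_assumptions(backlog):
    print(f"{a.risk:>2}  {a.name}")
```

Whatever lands at the top of the list is the first thing to test. An assumption with high impact but low uncertainty (here, technical feasibility) drops to the bottom because you already have evidence for it.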

3. Validate the Problem Through User Interviews

Surveys scale, but they presume you already know the right questions. For early validation, interviews are more valuable because they let you follow unexpected threads and catch nuances that structured questions miss.

How many interviews are enough? Five or six interviews per segment usually surface most of the major themes. Patterns firm up around interviews 8-12, with diminishing returns after 15-20.

Who to talk to: Start by building a simple persona - their role, the context in which they experience the problem, their current workaround, and whether they're the end user, the decision-maker, or both. Then target people who match that persona and have experienced the problem recently. If someone other than the end user makes purchasing decisions, talk to them too. Avoid friends, family, and anyone who might tell you what you want to hear.

Questions That Show You Real User Behavior

Once you're in the interview, anchor on past behavior rather than hypothetical futures. These three questions help you filter out aspirational feedback and identify real pain points:

- Walk me through the last time you dealt with this issue. Identifies actual behavior rather than hypothetical preferences.

- What have you tried to solve this? Shows whether they've actively sought solutions.

- What did that cost you in time, money, or stress? Quantifies the pain.

Signs of Strong Problem Validation

Weak signals include polite enthusiasm, agreement with leading questions, and feedback that sounds too close to your own framing. What you're looking for instead:

- Multiple users describe the same pain point unprompted

- Users have built workarounds or spreadsheets

- They're already spending money trying to solve it

- They ask to be notified when your solution is ready

4. Define Clear Pass/Fail Validation Metrics

"Users loved it" isn't a validation metric. Validation requires measurable thresholds defined before you run the experiment.

Why "positive feedback" is misleading: People are polite. The gap between stated preference and actual behavior - the "say-do gap" - is huge in product research.

Define validation criteria before seeing results. "If 8 of 10 interview subjects describe this problem unprompted, we proceed. If fewer than 5 do, we pivot."

Example Validation Metrics

| Stage | Metric | Validation Threshold |
| --- | --- | --- |
| Problem interviews | Problem described unprompted | 70%+ |
| Landing page | Email signup conversion | 10%+ |
| Waitlist | Pre-order conversion | 5%+ |
| Early access | Week 2 retention | 40%+ |

Pre-committing to thresholds prevents confirmation bias. When you define success criteria before seeing results, you remove the temptation to rationalize weak data or move the goalposts after the fact. Write your thresholds down, share them with a co-founder or advisor, and hold yourself accountable to what you said would constitute a pass or fail.
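One lightweight way to hold yourself to those thresholds is to write them into a script before any data exists. The sketch below reuses the numbers from the example table. The stage keys and the `evaluate` helper are hypothetical names chosen for illustration.

```python
# Thresholds from the example table, committed before any results come in.
THRESHOLDS = {
    "problem_interviews": 0.70,  # problem described unprompted
    "landing_page": 0.10,        # email signup conversion
    "waitlist": 0.05,            # pre-order conversion
    "early_access": 0.40,        # week 2 retention
}


def evaluate(stage: str, successes: int, total: int) -> str:
    """Compare an observed rate with the pre-committed threshold for a stage."""
    if total <= 0:
        raise ValueError("total must be positive")
    rate = successes / total
    verdict = "PASS" if rate >= THRESHOLDS[stage] else "FAIL"
    return f"{stage}: {rate:.0%} vs {THRESHOLDS[stage]:.0%} threshold -> {verdict}"


# Hypothetical results: 8 of 10 interviewees, 18 signups from 300 visitors.
print(evaluate("problem_interviews", successes=8, total=10))
print(evaluate("landing_page", successes=18, total=300))
```

Because the thresholds live in one place and the verdict is computed, there is no room to reinterpret a 6% signup rate as "promising" after the fact.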

5. Test Demand With a Landing Page

Before building a page, check whether people are already searching for solutions. Use Google Keyword Planner or Ubersuggest to see search volume for terms related to your problem. If nobody’s searching, that’s something you should take seriously.

A landing page tests whether your value proposition resonates with a cold audience.

What a validation landing page needs:

- A headline stating the promised outcome

- Brief explanation of how you deliver it

- Social proof if available

- A single call-to-action

Write outcome-driven messaging: Lead with what users get, not what you built. For example, "Ship projects 20% faster" is stronger than "AI-powered project management."

Build with Carrd, Webflow, or Unbounce, and track behavior with Google Analytics or Mixpanel.

Channels to Drive Early Traffic

- Paid ads: Run $200-500 on Google Ads or Meta Ads targeting your exact user segment. The goal is to test whether your messaging earns clicks from cold traffic.

- Niche communities: Find where your users already gather - Reddit, Slack groups, Discord servers, or industry forums. Share genuinely, ask for feedback, and link to your page where relevant.

- Direct outreach: Email or message 20-30 people who fit your persona. Ask for their reaction to your landing page, not a commitment to buy.

According to Unbounce’s 2024 analysis of 41,000 landing pages, the median conversion rate across industries is 6.6%. For validation, 10% or higher suggests strong resonance, while a rate below 3% may signal messaging or targeting issues. Benchmarks vary by industry, though: SaaS averages around 3.8%.
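With a few hundred visitors, a single conversion percentage can be misleading, so it helps to look at a range rather than a point. The sketch below computes a Wilson score interval (a standard way to put error bars on a proportion) along with cost per signup. The traffic and spend figures are made up for illustration.

```python
import math


def wilson_interval(conversions: int, visitors: int, z: float = 1.96) -> tuple[float, float]:
    """Approximate 95% Wilson score interval for a conversion rate."""
    if visitors <= 0:
        raise ValueError("visitors must be positive")
    p = conversions / visitors
    denom = 1 + z**2 / visitors
    center = (p + z**2 / (2 * visitors)) / denom
    margin = z * math.sqrt(p * (1 - p) / visitors + z**2 / (4 * visitors**2)) / denom
    return center - margin, center + margin


# Hypothetical test: $300 of ads, 400 visitors, 36 email signups.
ad_spend, visitors, signups = 300.0, 400, 36
low, high = wilson_interval(signups, visitors)
print(f"Conversion: {signups / visitors:.1%} (95% CI {low:.1%} to {high:.1%})")
print(f"Cost per signup: ${ad_spend / signups:.2f}")
```

In this example the observed rate is 9%, but the interval runs from roughly 6.5% to just over 12%, so the data cannot yet say whether the page clears a 10% bar. More traffic narrows the range.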

6. Build a No-Code or Concierge MVP

Before looking at build options, it’s helpful to distinguish three pre-build artifacts that are often mixed up.

- Proof of Concept (POC): Tests whether the idea is technically feasible. Typically internal-only, built to answer "can we actually make this work?"

- Prototype: Tests navigation, design, and user flow with a non-functional mock. Does this make sense to users?

- MVP: A minimal but functional product released to test real market demand. Will people actually use and pay for this?

For validation, you're usually building toward an MVP - but you don't need code to get there.

Concierge MVP: You manually perform the core function to prove users value the output. This confirms the logic works before you hard-code anything.

No-Code MVP: Use platforms like Bubble, Glide, or Airtable to build functional interfaces without custom development. This tests the user journey at a fraction of traditional costs.

Concierge MVP examples:

Zappos founder Nick Swinmurn validated online shoe sales by posting photos from local stores on a basic website. When orders came in, he bought shoes from the store and shipped them himself - validating demand without inventory investment.

DoorDash began with a simple website featuring PDF menus and a Google Voice number in January 2013, with the co-founders initially handling all deliveries themselves. This hands-on approach allowed them to learn logistics and operational challenges before developing a scalable platform.

What you’ll learn by doing it manually:

Running things manually gives you insights you can’t get any other way. When you're personally fulfilling orders or delivering the service, you see exactly where users hesitate, what questions they ask that your interface didn't anticipate, and which steps take longer than you assumed.

DoorDash's founders discovered delivery timing and restaurant coordination challenges they never would have anticipated from a whiteboard - knowledge that shaped how they built their actual platform. You also get real unit economics: how long each task takes, what it actually costs to deliver value, and whether the margins can ever work at scale.
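If you log time and costs while fulfilling orders by hand, the unit economics fall out of simple arithmetic. The following is a rough sketch. The order figures, the hourly labor cost, and the `contribution_margin` helper are all assumptions for illustration, not data from any real company.

```python
def contribution_margin(revenue: float, direct_costs: float,
                        minutes_spent: float, hourly_labor_cost: float) -> float:
    """Revenue left per order after direct costs and the labor needed to fulfill it."""
    labor = minutes_spent / 60 * hourly_labor_cost
    return revenue - direct_costs - labor


# Hypothetical numbers logged while fulfilling orders manually.
orders = [
    {"revenue": 6.00, "direct_costs": 1.00, "minutes_spent": 25},
    {"revenue": 6.00, "direct_costs": 1.50, "minutes_spent": 40},
    {"revenue": 8.00, "direct_costs": 1.00, "minutes_spent": 30},
]
HOURLY_LABOR_COST = 18.0  # assumed cost of the person doing the work

margins = [contribution_margin(hourly_labor_cost=HOURLY_LABOR_COST, **o) for o in orders]
average = sum(margins) / len(margins)
print(f"Average margin per order: {average:.2f} USD")
```

A negative average like the one these sample numbers produce is not a failure at the concierge stage. It tells you how much time per order automation would need to remove before the margins work.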

7. Test Pricing Earlier Than You're Comfortable With

Founders typically delay pricing conversations because rejection feels personal. This is a mistake. Willingness to pay at a viable price point is one of the highest-risk assumptions, and discovering nobody will pay $20/month is far cheaper to learn now than after you've built the product.

The problem is that interest and willingness to pay are not the same thing. Users who enthusiastically say they'd "definitely use" a free product often vanish when you attach a price. The only reliable signal is actual payment behavior.

Pricing Validation Techniques

- Fake-door tests: Show a price on your landing page and measure whether conversion rates change at different price points. This works best when you have enough traffic to see statistically meaningful differences - run the same page at $9/month for a week, then $19/month, and compare signup rates.

- Pre-sales: Collect deposits for a product that doesn't exist yet. This is the strongest signal because money has changed hands. Offer early access at a discount in exchange for payment now - users who pay before the product exists demonstrate genuine commitment.

- Value-based questions: In interviews, ask users what the problem currently costs them, for example: "When this goes wrong, what does it cost you in time or money?" A solution that saves $10k annually can be priced differently than one that saves $100 - and their answer tells you where your ceiling is.

If none of these approaches get anyone to pay anything, that's not a pricing problem - it's a value problem.
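For the fake-door test, "statistically meaningful" can be checked with a two-proportion z-test, one common way to compare two conversion rates. The sketch below uses only the standard library. The visitor and signup counts are hypothetical.

```python
import math


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int) -> tuple[float, float]:
    """Return (z, two-sided p-value) for the difference between two conversion rates."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("cannot test: no variation in the pooled conversion rate")
    z = (p_a - p_b) / se
    # Two-sided p-value from the standard normal distribution, via the error function.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical results: $9/month page (week 1) vs. $19/month page (week 2).
z, p = two_proportion_z_test(conv_a=48, n_a=600, conv_b=30, n_b=600)
print(f"z = {z:.2f}, p = {p:.3f}")
```

A small p-value suggests the gap between price points is unlikely to be noise. Keep in mind that running the prices in different weeks means traffic quality may differ too, so treat the result as a signal rather than proof. Splitting visitors between both prices at the same time is cleaner if your tooling allows it.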

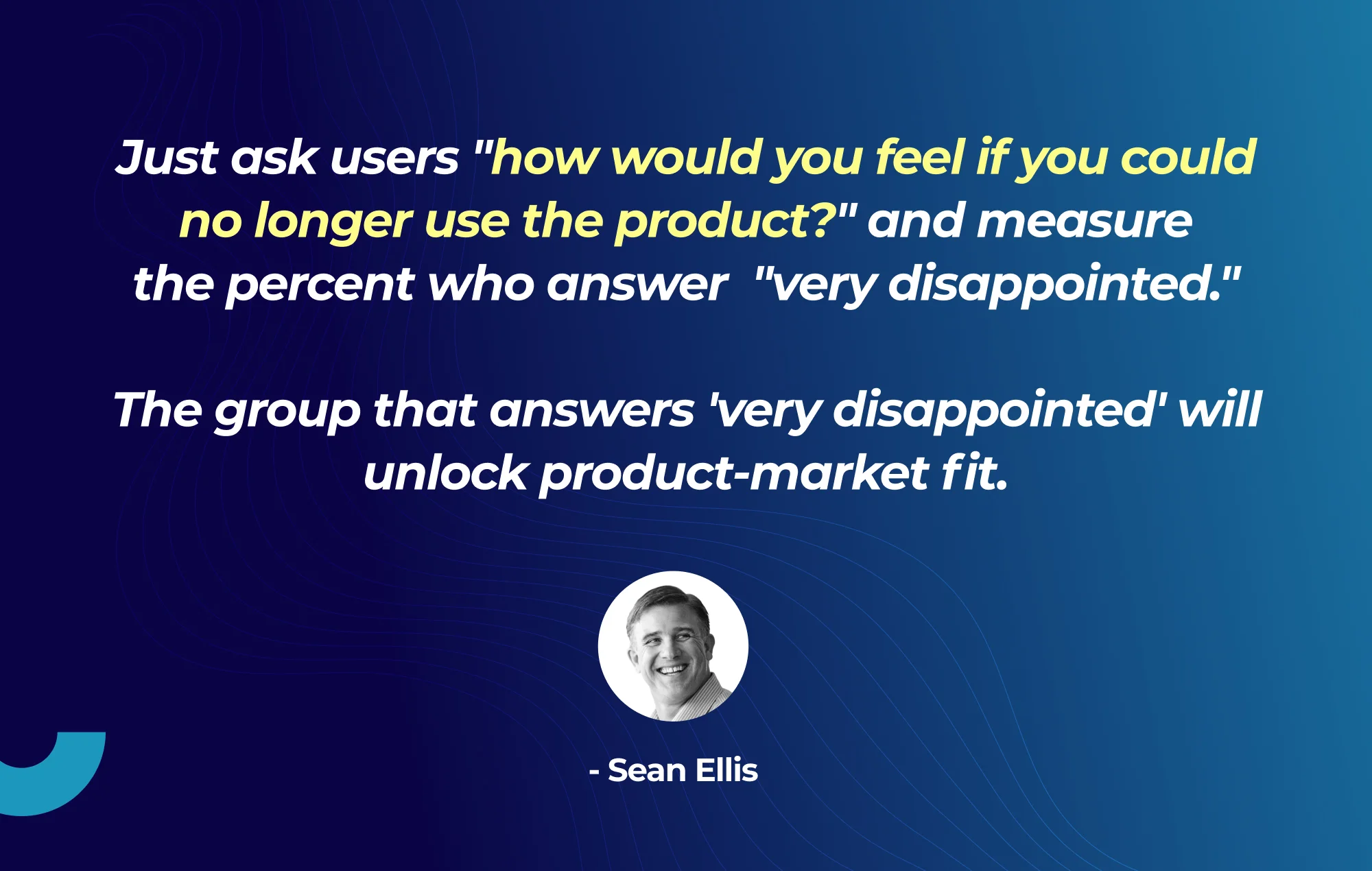

8. Focus on Retention Before Features

Early excitement often fades, and launch-day downloads don't equal product-market fit; the main metric is whether users return.

Track retention at day 1, day 7, and day 30. At pre-scale, this can be as simple as a spreadsheet tracking who logged in, manual check-ins via email, or basic analytics through Mixpanel or Amplitude.

As a rough benchmark, healthy early-stage products often see 40-60% day 1 retention, 20-30% by day 7, and 10-15% by day 30 - though this varies by category. If you're losing 80%+ of users within the first week, that's a signal worth investigating before adding more features.
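If your tracking is still a spreadsheet of who logged in when, day-N retention takes only a few lines to compute. This sketch assumes the "classic" definition (active exactly N days after signup). Analytics tools offer other definitions, and the activity log here is invented.

```python
from datetime import date, timedelta

# Hypothetical log: user -> (signup date, set of dates the user was active).
activity: dict[str, tuple[date, set[date]]] = {
    "ana":   (date(2024, 3, 1), {date(2024, 3, 2), date(2024, 3, 8), date(2024, 3, 31)}),
    "ben":   (date(2024, 3, 1), {date(2024, 3, 2), date(2024, 3, 8)}),
    "chloe": (date(2024, 3, 2), {date(2024, 3, 3)}),
    "dev":   (date(2024, 3, 2), set()),
    "eli":   (date(2024, 3, 3), {date(2024, 3, 4), date(2024, 3, 5)}),
}


def retention(log: dict[str, tuple[date, set[date]]], day: int) -> float:
    """Share of users who were active exactly `day` days after signing up."""
    retained = sum(
        1 for signup, active_days in log.values()
        if signup + timedelta(days=day) in active_days
    )
    return retained / len(log)


for n in (1, 7, 30):
    print(f"Day {n} retention: {retention(activity, n):.0%}")
```

With five users the percentages are only illustrative. The same function works unchanged on a few hundred rows exported from a spreadsheet.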

Identifying "must-have" versus "nice-to-have": Ask users what they'd do if the product disappeared. Indifference signals nice-to-have. Significant disruption signals must-have.

Sean Ellis, who developed the Product/Market Fit survey methodology, proposed that if 40%+ of users say they'd be "very disappointed" without your product, you've likely found fit.
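Scoring that survey is a simple tally. The sketch below assumes you asked "How would you feel if you could no longer use the product?" with three answer options. The response counts are invented.

```python
from collections import Counter

# Hypothetical answers from 50 active users.
responses = (
    ["very disappointed"] * 22
    + ["somewhat disappointed"] * 19
    + ["not disappointed"] * 9
)

counts = Counter(responses)
share = counts["very disappointed"] / len(responses)
print(f"Very disappointed: {share:.0%}")
print("Meets the 40% benchmark" if share >= 0.40 else "Below the 40% benchmark")
```

Survey only people who have actually used the product recently. Including signups who never came back skews the share.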

9. Decide: Pivot, Persevere, or Pause

Validation is about acting on insights, not just collecting data. At some point, you have to make a call.

No single signal is definitive, but when multiple indicators align - users actively seeking your solution unprompted, organic word-of-mouth bringing new users, stable or improving retention, and unit economics trending toward positive - you have enough evidence to move forward with confidence.

If validation is weak across your target market but strong in a subset, narrow rather than expand. If interest is high but willingness to pay is low, test alternative framings before abandoning the concept entirely.

If you’ve tested across multiple user segments, tried different messaging, adjusted pricing, and still haven’t seen a meaningful signal after 8 to 12 weeks of focused effort, it’s time to pause and put your resources somewhere else.

Common Mistakes in App Idea Validation

When you're in the middle of validation - emotionally invested, short on time, hoping for good news - it's easy to take shortcuts in ways that feel reasonable but undermine the whole exercise.

- Validating with friends, family, or internal teams only: These people want you to succeed and will unconsciously filter their feedback, emphasizing positives and downplaying concerns. Test with strangers who have no stake in your success.

- Confusing interest with commitment: Someone saying they'd use your product means nothing. Signups, deposits, and completed purchases mean everything. Measure what people do, not what they say.

- Avoiding pricing conversations: Founders delay pricing because it feels premature. It's not. Learning that users won't pay what you need to charge costs far less before you build than after.

- Ignoring negative or lukewarm signals: One enthusiastic user doesn't outweigh nine indifferent ones. If you're searching hard for positive signals, the answer is already clear.

- Falling in love with the solution instead of the problem: Attachment to a specific implementation makes pivoting psychologically difficult. Stay attached to the problem; stay flexible on how you solve it.

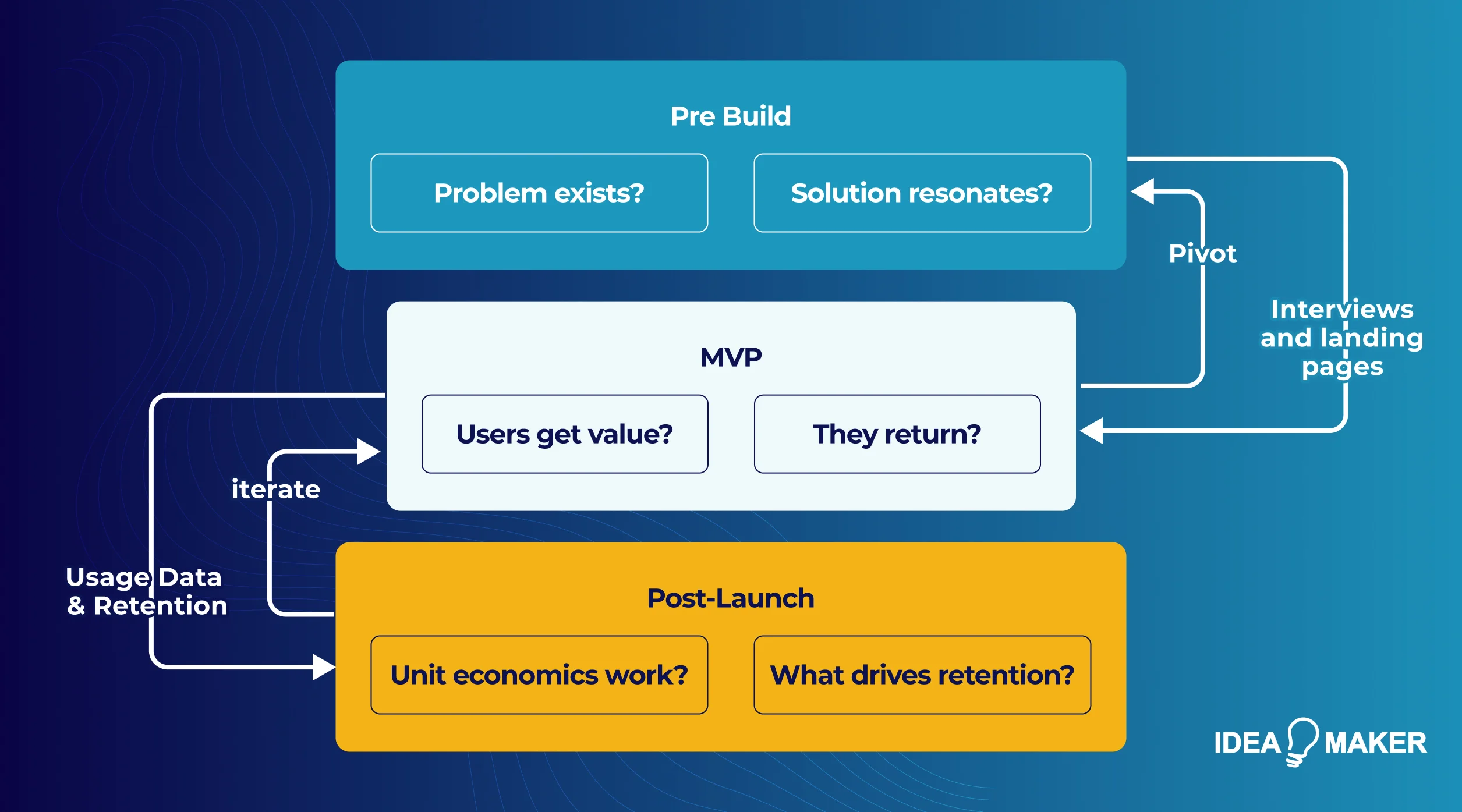

How App Idea Validation Fits Into the Product Development Lifecycle

Once you've validated and started building, it’s easy to stop doing interviews and focus on shipping. But the assumptions don’t stop just because you’re coding.

Pre-build validation looks at problem existence and solution resonance. You’re testing whether the pain is real and whether your proposed solution resonates before writing any code.

MVP validation moves to usability and value delivery. Can users actually get value from what you’ve built? Do they return after the first session? Here, you’re validating that your solution works in practice, not just in theory.

Post-launch validation is about scaling and iteration. Do the unit economics hold as you grow? Which features really keep users, and which ones don’t?

Agile development supports this continuous validation through short iteration cycles and frequent user feedback. As you scale, real usage data replaces interview insights - behavioral analytics, A/B tests, and cohort analysis let you validate at scale what you initially validated through conversation.

How Idea Maker Helps Teams Validate App Ideas the Right Way

Most founders know they should validate before building. The challenge is knowing which assumptions to test first, how to design experiments that produce a reliable signal, and when the data is strong enough to move forward. Idea Maker partners with startups and product teams to build that rigor into every stage of development.

| Service | What it covers |
| --- | --- |
| Product Strategy | Problem definition, hypothesis mapping, validation roadmaps |
| User Research | Interview design, recruitment, synthesis |
| Rapid Prototyping | Clickable prototypes for testing before development |
| Landing Page Tests | Conversion-optimized pages with analytics |
| MVP Development | Products built for learning, not just launching |
| Analytics Setup | Instrumentation for ongoing validation |

Validation is easier when you've done it before. You learn which signals matter, which objections are real blockers, and when the data is strong enough to act on. If you're considering validating an app idea, reach out to discuss next steps.

Final Thoughts

Nobody sets out to build something nobody wants. But without validation, that's exactly what happens to a third of startups. What matters most is whether you took time to test your assumptions.

Today, with AI making it easier to ship quickly, it’s possible to build fast and still build the wrong thing. The real cost is the time spent going in the wrong direction. Build with evidence - in an era of commodity AI, unique value is found in solving validated, high-pain problems.